The architecture concept currently most favored in Big Data environments is the Lambda Architecture. It is typically used when raw data and the calculations based on them must be reset to an arbitrary point in time and reprocessed.

For example, the Lambda Architecture makes it possible to fix a faulty application, redeploy it and rerun all processes from the point in time at which the faulty version started running, without impairing the consistency of the overall system.
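The replay idea can be sketched in a few lines. This is a minimal sketch, assuming the raw events are kept in an immutable, time-ordered log (as in the batch layer of a Lambda Architecture); the `Event` type, the `replay` helper and the data are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Event:
    timestamp: int  # epoch seconds
    value: float


def replay(log: Iterable[Event], since: int, process: Callable[[Event], float]) -> List[float]:
    """Recompute results for every stored raw event at or after `since`."""
    return [process(event) for event in log if event.timestamp >= since]


# Hypothetical immutable raw-event log; a faulty version was deployed at t=200.
raw_log = [Event(100, 1.0), Event(200, 2.0), Event(300, 3.0)]


def fixed_process(event: Event) -> float:
    return event.value * 100  # corrected calculation after the fix


# Redeploy the fix and rerun everything from the faulty deployment onward.
corrected = replay(raw_log, since=200, process=fixed_process)
print(corrected)  # [200.0, 300.0]
```

Because the raw log is never modified, a replay is repeatable; the recomputed values would then replace the faulty ones.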

The Kappa Architecture is a further development of the Lambda Architecture; put simply, it corresponds to the Lambda Architecture without batch processing.

Both approaches are excellent and widely used, but they require considerable development effort, administration and storage capacity, as well as complete (100%) data provenance. A more detailed explanation can be found in the section Streaming Architecture.

To offer our customers an architecture that processes continuous data flows of widely differing sizes, handles ad hoc adjustments as well as fluctuating load scenarios, and requires less administration, we have designed the BOSON Architecture.

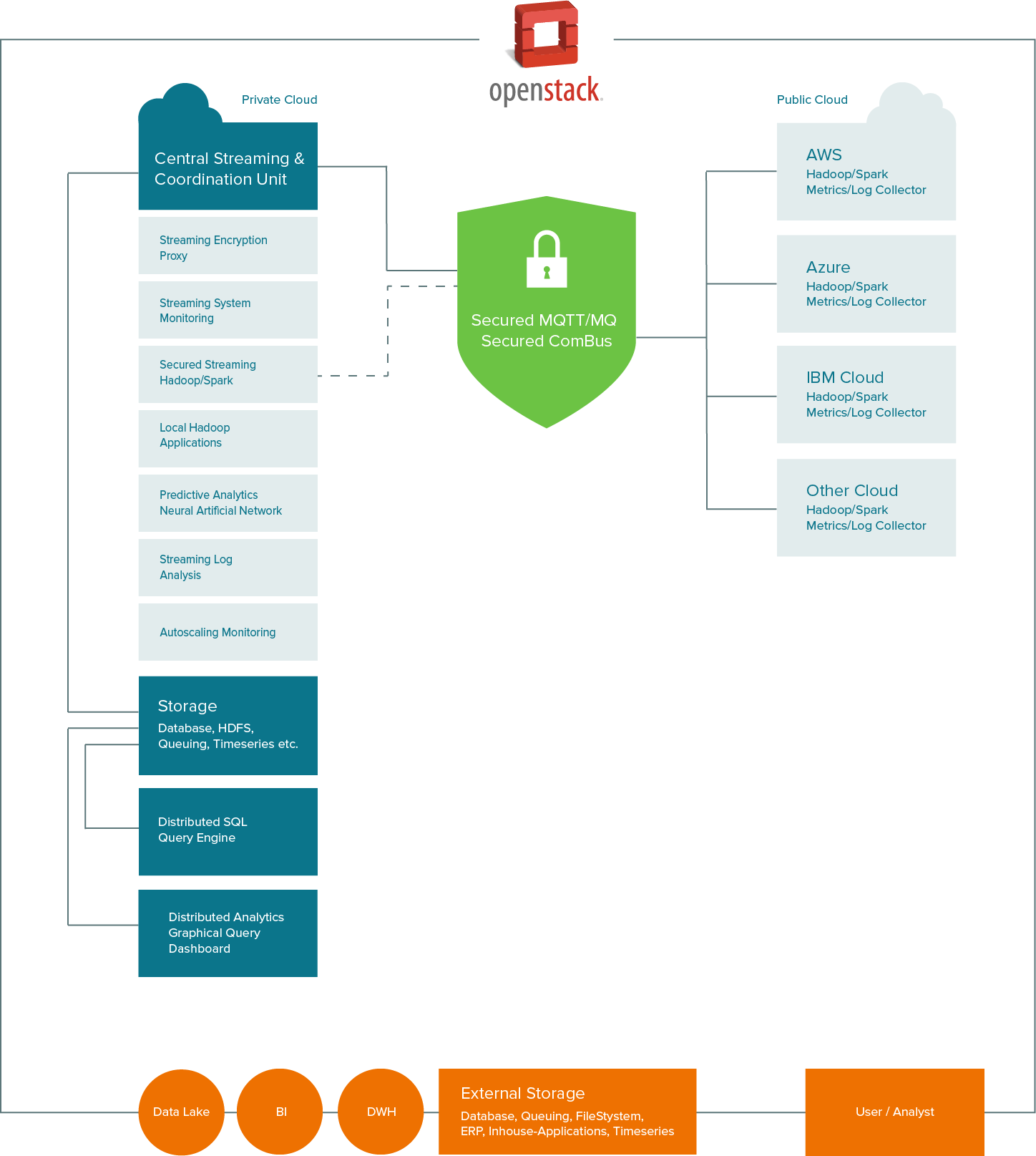

The goal of the BOSON Architecture is a business architecture, based on these requirements, that is clear, easy to administer, fault-tolerant and self-monitoring. At the same time, it makes it possible to administer and interconnect every stage, from data acquisition and data processing (aggregation, reporting, etc.) through storage in Data Lakes to evaluation by BI (business intelligence) and predictive analytics. In addition, it is designed to meet the highest security requirements, both externally and internally.

The core of the BOSON Architecture is a central streaming and coordination unit that controls all processes.

The basis of the BOSON Architecture is the Integrated Hybrid Infrastructure (IHI), which provides the framework for fault tolerance, security, cloud operation, On Demand Compute and distributed application flows.

The BOSON Architecture, which is secure, reactive, cost-effective and self-monitoring, consists of the following five main components. Each of them can be defined individually according to customer requirements.

Business Driven Process Management

Agile methods such as Scrum or Kanban are standard today in development and project management in almost all companies. Working agilely makes it possible to act or react quickly to changes.

We apply this idea to business processes under the term Business Driven Process Management (BDPM): the rapid adaptation of business processes to market requirements. Its central requirement is that any component in a business process can be replaced by another component at any time without the business process having to be interrupted or stopped. For our customers, it is important that no knowledge of the overall infrastructure is required to replace components: a database, or a new or additional BI/analytics system, can be replaced or added without knowing or having to consider the dependencies of the business processes.

On Demand Compute

Data flows whose volume and content change constantly place an unpredictable load on the available resources, and companies must be able to react to it. In practice, two approaches are relevant.

The first approach sizes the company's own computing power so that normal operation uses at most 60% of the capacity. Increasing load is absorbed by the capacity that was previously unused. This works as long as no more than 95% of the available capacity is used; if the load keeps growing beyond that, the system collapses. The system must therefore either be monitored constantly and expanded with new capacity, or the company must accept the risk that business processes stop or do not finish on time. The result is either higher fixed costs or unpredictable process runtimes.
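The trade-off can be quantified directly from the percentages in the text. A minimal sketch; the function name is hypothetical and only the quoted utilization figures are used:

```python
def growth_headroom(normal_util: float, limit_util: float) -> float:
    """Relative load increase that fits before utilization reaches limit_util."""
    return limit_util / normal_util - 1.0


# Static sizing from the text: 60 % in normal operation, collapse beyond 95 %.
static_headroom = growth_headroom(0.60, 0.95)  # about 0.58, i.e. roughly +58 % load
idle_share = 1.0 - 0.60                        # 40 % of owned capacity idles normally

# Hybrid sizing from the next paragraph: 80 % normal, public-cloud threshold at 90 %.
hybrid_trigger = growth_headroom(0.80, 0.90)   # about 0.125, i.e. bursting starts at +12.5 %
```

With static sizing, 40% of the purchased capacity sits idle in normal operation and still only buys about 58% of load growth; the hybrid approach runs closer to its capacity and hands peaks to the public cloud.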

The second approach, which forms a main component of the BOSON Architecture, is a hybrid cloud solution with On Demand Compute. The company runs its own computing capacity in the private cloud and can reduce it to the point where normal operation uses 80% of it. Intelligent monitoring and modern analytical methods track the load on the system and predict its behavior over the next few minutes. If utilization exceeds a freely definable threshold, for example 90%, additional capacity is automatically added (on demand) from the public cloud.
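The threshold-plus-prediction logic might look like the following sketch. Assumptions, none of which come from the text: utilization is sampled once a minute, the "prediction" is a simple least-squares linear trend standing in for the modern analytical methods mentioned above, all nodes have equal capacity, and the helper names, the 10 private nodes and the 5-minute horizon are hypothetical.

```python
import math
from typing import List


def predict_utilization(samples: List[float], minutes_ahead: int) -> float:
    """Least-squares linear trend over per-minute samples, extrapolated ahead."""
    n = len(samples)  # needs at least two samples
    mean_x = (n - 1) / 2
    mean_y = sum(samples) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(samples))
    slope = sxy / sxx
    return mean_y + slope * (n - 1 + minutes_ahead - mean_x)


def burst_nodes_needed(samples: List[float], private_nodes: int = 10,
                       threshold: float = 0.90, minutes_ahead: int = 5) -> int:
    """Public-cloud nodes to add so the predicted load stays at or below the threshold."""
    predicted = predict_utilization(samples, minutes_ahead)
    if predicted <= threshold:
        return 0
    load_in_nodes = predicted * private_nodes  # predicted work, in units of one node
    return math.ceil(load_in_nodes / threshold) - private_nodes


rising = [0.80, 0.82, 0.84, 0.86, 0.88]   # one sample per minute
print(burst_nodes_needed(rising))          # trend predicts 0.98 in 5 minutes -> 1
print(burst_nodes_needed([0.80] * 5))      # flat load -> 0
```

Acting on the prediction rather than on the current value gives the public-cloud nodes time to start before the threshold is actually reached.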

The public cloud serves only to provide additional computing power (compute) and thus to relieve the system.

All data are kept within the company's private cloud. Sensitive data that must be sent to the public cloud for processing can be encrypted on the way out and decrypted again on the way back, fully transparently, by a proxy service.

Streaming Architecture

In many Big Data environments, data are stored on a file system (HDFS) or in databases and then evaluated by distributed jobs. One disadvantage of this method is the storage of the data itself. Storage keeps getting cheaper thanks to rapid technical progress, but data volumes keep growing: where hundreds of megabytes used to be stored, today it is gigabytes to terabytes. Storing these data raises fixed costs, because more hard drives are needed, which in turn means higher power consumption, more physical space, more administrative work and additional staff. At the same time, processing times grow, because the data must first be transferred from storage before they can be evaluated. Over time, the data volume becomes so large that delivering the data (reading them) takes longer than processing them, and rapid evaluation is no longer possible.
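A back-of-the-envelope calculation shows why data delivery can come to dominate. The volume and throughput below are hypothetical example values, not measurements:

```python
def full_scan_hours(volume_bytes: float, read_bytes_per_s: float) -> float:
    """Hours needed just to read a data set once at a sustained throughput."""
    return volume_bytes / read_bytes_per_s / 3600


# Illustrative figures only: 10 TB read at an aggregate 500 MB/s.
hours = full_scan_hours(10e12, 500e6)
print(f"{hours:.1f} h")  # 20,000 s of pure reading, about 5.6 h
```

If the evaluation itself took, say, one hour, reading the data would account for most of the total runtime.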

In most cases, intermediate storage of data streams is not necessary, because the raw data are only meaningful at the moment they arrive (just in time). IP addresses are one example: resolving the geographic location of an IP address at a later date will very likely return a different location. Transforming or aggregating the raw data as they arrive is therefore often essential to retain the correct information, and thus to obtain correct results, at a later point in time.
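The IP example can be sketched as enrichment at ingest time. This is a minimal sketch: the lookup table is a hypothetical snapshot standing in for a real geo-IP database, and dropping the raw IP is one possible design choice, not a prescription from the text.

```python
import ipaddress
from datetime import datetime, timezone

# Hypothetical geo-IP snapshot, valid only *now*; real tables change over time.
# The networks are documentation ranges (RFC 5737).
GEO_SNAPSHOT = {
    ipaddress.ip_network("203.0.113.0/24"): "Berlin, DE",
    ipaddress.ip_network("198.51.100.0/24"): "Vienna, AT",
}


def enrich(event: dict) -> dict:
    """Resolve the location at ingest time and keep only the derived fields."""
    ip = ipaddress.ip_address(event["ip"])
    location = next((loc for net, loc in GEO_SNAPSHOT.items() if ip in net), "unknown")
    return {
        "ts": event["ts"],
        "location": location,  # resolved now, so it stays correct later
        "resolved_at": datetime.now(timezone.utc).isoformat(),
        # the raw IP is deliberately not carried forward
    }


record = enrich({"ts": 1700000000, "ip": "203.0.113.7"})
print(record["location"])  # Berlin, DE
```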

This approach also reduces the storage space needed to comply with data provenance requirements.

Modern Data Lakes therefore no longer store all information, only transformed or aggregated data, and so avoid accumulating Dark Data. Avoiding Dark Data saves storage space and reduces the risk of holding information that is useless today or may no longer be stored legally. The result is a Data Lake with Smart Data.
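As an illustration of storing aggregates instead of raw records, a tumbling-window count reduces an event stream to one row per window and key. The event shape and the 60-second window are assumptions made for this example:

```python
from collections import defaultdict


def tumbling_window_counts(events, window_s=60):
    """Aggregate (timestamp, key) events into per-window counts; raw events are not kept."""
    counts = defaultdict(int)
    for ts, key in events:
        window_start = ts - ts % window_s  # start of the window this event falls into
        counts[(window_start, key)] += 1
    return dict(counts)


events = [(0, "login"), (15, "login"), (59, "logout"), (61, "login")]
summary = tumbling_window_counts(events)
print(summary)  # {(0, 'login'): 2, (0, 'logout'): 1, (60, 'login'): 1}
```

Only the three summary rows would reach the Data Lake; the four raw events could be discarded.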

In a Streaming Architecture, data flow through the company from the source to the Data Lake in a fail-safe way and are transformed or aggregated at suitable points. If a process step fails (network, software error, etc.) and the data flow is interrupted, the data are buffered rather than lost, so the overall process is not affected.

Hybrid Cloud

A secure and easy-to-administer hybrid cloud environment is required for On Demand Compute.

Sensitive data are stored and processed in the private cloud. The public cloud only adds computing power and stores no data itself. Before sensitive data are sent to the public cloud for calculation, they are encrypted on the fly by proxies (communication interfaces): an encryption proxy, which changes the encryption algorithm and the key at configurable intervals, secures the data on the way out, and a decryption proxy automatically decrypts the results as soon as they arrive back in the private cloud.
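The key-rotation idea behind the proxy described above can be sketched as follows. This is not the authors' proxy: it is a hypothetical illustration in which the class name, the 300-second interval and the message format are assumptions. The HMAC-SHA256 counter-mode construction only stands in for a vetted cipher such as AES-GCM from an established cryptography library, so that the sketch needs nothing but the standard library, and changing the algorithm is not modelled.

```python
import hashlib
import hmac
import os
import time
from typing import Dict, Tuple


class RotatingKeyProxy:
    """Toy encryption/decryption proxy pair sharing one key ring (illustration only)."""

    def __init__(self, rotate_every_s: float = 300.0):
        self.rotate_every_s = rotate_every_s
        self.keys: Dict[int, bytes] = {}  # key id -> master key; old keys are kept
        self.current_id = -1
        self.rotated_at = 0.0
        self._rotate()

    def _rotate(self) -> None:
        self.current_id += 1
        self.keys[self.current_id] = os.urandom(32)
        self.rotated_at = time.monotonic()

    @staticmethod
    def _subkeys(master: bytes) -> Tuple[bytes, bytes]:
        # Separate keys for encryption and authentication.
        enc = hmac.new(master, b"enc", hashlib.sha256).digest()
        mac = hmac.new(master, b"mac", hashlib.sha256).digest()
        return enc, mac

    @staticmethod
    def _xor_keystream(key: bytes, nonce: bytes, data: bytes) -> bytes:
        stream = bytearray()
        counter = 0
        while len(stream) < len(data):
            stream += hmac.new(key, nonce + counter.to_bytes(8, "big"), hashlib.sha256).digest()
            counter += 1
        return bytes(a ^ b for a, b in zip(data, stream))

    def encrypt(self, plaintext: bytes) -> Tuple[int, bytes, bytes, bytes]:
        """Encrypt before data leave the private cloud; rotate the key when due."""
        if time.monotonic() - self.rotated_at >= self.rotate_every_s:
            self._rotate()
        enc, mac = self._subkeys(self.keys[self.current_id])
        nonce = os.urandom(16)
        ciphertext = self._xor_keystream(enc, nonce, plaintext)
        tag = hmac.new(mac, nonce + ciphertext, hashlib.sha256).digest()
        # The key id travels with the message so results can be decrypted after rotation.
        return self.current_id, nonce, ciphertext, tag

    def decrypt(self, key_id: int, nonce: bytes, ciphertext: bytes, tag: bytes) -> bytes:
        """Decrypt results arriving back in the private cloud."""
        enc, mac = self._subkeys(self.keys[key_id])
        expected = hmac.new(mac, nonce + ciphertext, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, tag):
            raise ValueError("message was modified in transit")
        return self._xor_keystream(enc, nonce, ciphertext)


proxy = RotatingKeyProxy(rotate_every_s=300)
message = proxy.encrypt(b"customer 4711")
print(proxy.decrypt(*message))  # b'customer 4711'
```

Because every message carries its key id, results computed under a key that has since been rotated out can still be decrypted; a real deployment would also retire old keys after a grace period.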

Predictive Analytics

Predictive analytics covers a wide range of statistical methods, including predictive modelling and data mining. These methods analyze current and historical data in order to predict future events or trends.

Modern predictive analytics is supported by (artificial) neural networks, which help with pattern recognition, outlier detection, cluster search and much more. Their advantage is that they can recognize completely new relationships that were not modelled in advance and can even propose hypotheses independently, some of which raise further questions worth investigating.

When predictive analytics and neural networks are combined, the data are first analyzed and correlated by machine learning and then evaluated with statistical methods. The results are more precise and meaningful than those of earlier systems.

Our reference implementation of the BOSON Architecture is designed specifically for security and is built on open source software. Its framework is the Integrated Hybrid Infrastructure, based on OpenStack.

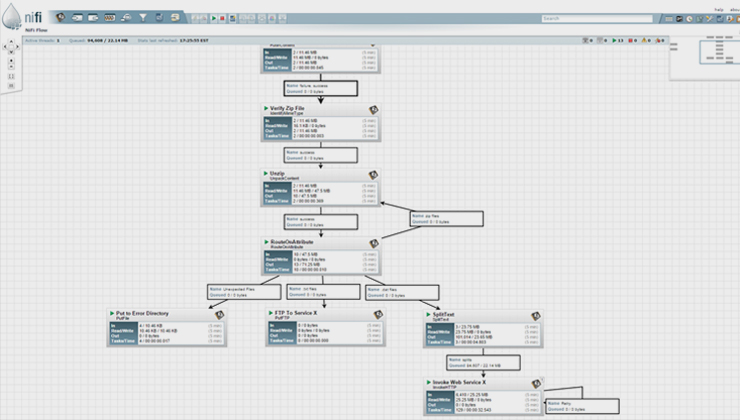

The core of the architecture is Apache NiFi, which receives all data flows and processes or forwards them. If a downstream step is interrupted, NiFi automatically buffers (queues) the data flows, and its back-pressure mechanism ensures that no system is overloaded and fails as a result. NiFi offers a large number of ready-made processors (micro services) and a simple framework for extending it. This saves development costs, since fewer Java, Scala or Python developers are needed.
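The effect of queuing plus back pressure can be illustrated with a toy simulation. This is not NiFi code: the `Connection` class and the tick-based scheduler are hypothetical. In NiFi itself, back pressure is configured per connection (an object-count and a data-size threshold), and once a threshold is reached the upstream component is no longer scheduled.

```python
from collections import deque
from typing import Deque, List


class Connection:
    """Queue between two flow steps with an object-count back-pressure threshold."""

    def __init__(self, object_threshold: int):
        self.queue: Deque[int] = deque()
        self.object_threshold = object_threshold

    def back_pressure(self) -> bool:
        return len(self.queue) >= self.object_threshold


def run_tick(source: Deque[int], conn: Connection, delivered: List[int],
             sink_up: bool, sink_rate: int = 2) -> None:
    """One scheduling round: the upstream step is not run while back pressure applies."""
    if source and not conn.back_pressure():
        conn.queue.append(source.popleft())
    if sink_up:
        for _ in range(min(sink_rate, len(conn.queue))):
            delivered.append(conn.queue.popleft())


source, conn, delivered = deque(range(10)), Connection(object_threshold=3), []
for _ in range(5):    # downstream system is unavailable
    run_tick(source, conn, delivered, sink_up=False)
print(len(conn.queue), len(source))  # 3 7: queue is capped, the rest waits upstream
for _ in range(20):   # downstream system is back
    run_tick(source, conn, delivered, sink_up=True)
print(delivered == list(range(10)))  # True: nothing lost, order preserved
```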

Apache NiFi serves as the central streaming and coordination unit and makes it simple to implement CEP (Complex Event Processing) requirements.
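CEP means recognizing patterns across several events rather than handling each event in isolation. As a language-neutral illustration, not a NiFi processor, here is a sliding-window rule; the event tuple layout and the rule "3 failed logins within 60 seconds" are assumptions.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Iterable, Iterator, Tuple


def failed_login_bursts(events: Iterable[Tuple[int, str, str]],
                        max_failures: int = 3,
                        within_s: int = 60) -> Iterator[Tuple[str, int]]:
    """Yield (user, ts) whenever a user has max_failures failed logins within within_s seconds.

    Events are (timestamp, user, outcome) tuples, assumed to arrive in time order.
    """
    recent: Dict[str, Deque[int]] = defaultdict(deque)
    for ts, user, outcome in events:
        if outcome != "fail":
            continue
        window = recent[user]
        window.append(ts)
        while ts - window[0] > within_s:  # drop failures outside the time window
            window.popleft()
        if len(window) >= max_failures:
            yield user, ts
            window.clear()  # report each burst only once


stream = [(0, "alice", "fail"), (10, "bob", "fail"), (20, "alice", "fail"),
          (30, "alice", "ok"), (45, "alice", "fail"), (200, "bob", "fail")]
print(list(failed_login_bursts(stream)))  # [('alice', 45)]
```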

Apache NiFi Copyright © 2015 The Apache Software Foundation

Integrated Hybrid Infrastructure based on OpenStack

Security plays a special role in several respects. On the one hand, only authorized persons may access data within the IHI; on the other hand, the infrastructure must isolate individual areas from one another, even within a single company.

To achieve this, we use the security features of OpenStack and Hadoop to secure the infrastructure in the best possible way.

Secure communication

Communication between the private cloud and the public cloud is secured with state-of-the-art VPN technology.

Sensitive data are handled on the fly by an encryption proxy, so that they never exist in unencrypted form outside the private cloud. The proxy is a module developed by us that constantly changes the key and thus further increases the security of the data.

IoT and Industry 4.0 ready

Every compute instance sends its metrics and system data to NiFi via MQTT, the standard IoT protocol. Existing or future machine-to-machine links can therefore run over the same communication bus. This saves time and cost and opens the way to the IoAT (Internet of Anything) or IoE (Internet of Everything).
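A sketch of the message an instance might publish. The topic scheme `metrics/<host>/<service>` and the JSON field names are hypothetical; only message construction is shown. The publish step itself needs an MQTT client library (for example Eclipse Paho, whose client offers a `publish(topic, payload, qos=...)` call), and on the NiFi side a `ConsumeMQTT` processor could subscribe to a wildcard topic such as `metrics/#`.

```python
import json
import socket
import time
from typing import Optional, Tuple


def metric_message(service: str, value: float,
                   host: Optional[str] = None) -> Tuple[str, bytes]:
    """Build the MQTT topic and JSON payload for one metric sample."""
    host = host or socket.gethostname()
    topic = f"metrics/{host}/{service}"  # hypothetical topic scheme
    payload = {"host": host, "service": service,
               "metric": value, "time": int(time.time())}
    return topic, json.dumps(payload).encode("utf-8")


topic, payload = metric_message("cpu.load", 0.42, host="node-17")
print(topic)  # metrics/node-17/cpu.load
```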

Calculation, Analysis, Reports

Calculations, analyses, reports and other applications run as Hadoop or Apache Spark applications. The compute resources they require are controlled and administered by Hadoop 2, specifically by its resource manager YARN (Yet Another Resource Negotiator).

Monitoring in the Hybrid Cloud

Monitoring in the hybrid cloud is implemented with a modern push-based approach.

The most widely used monitoring systems today, such as Nagios or Icinga, are pull systems: the monitoring server polls a configured list of hosts and services. With auto scaling, this configuration must therefore be adjusted constantly. Additional tools such as check_mk make continuous reconfiguration of Nagios/Icinga possible, but not very convenient. In our view, Nagios/Icinga is therefore not the best option for modern systems that use On Demand Compute.
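Why push suits auto scaling can be shown with a tiny registry modelled loosely on Riemann's index, in which every event carries a time-to-live (`ttl`): an instance becomes visible as soon as it sends its first event and expires when it stops reporting, with no central host list to edit. The class and method names are hypothetical.

```python
import time
from typing import Dict, List, Optional, Tuple

Key = Tuple[str, str]  # (host, service)


class PushIndex:
    """Registry filled by pushed events; entries expire when their TTL runs out."""

    def __init__(self) -> None:
        self.events: Dict[Key, dict] = {}

    def push(self, host: str, service: str, metric: float,
             ttl: float, now: Optional[float] = None) -> None:
        now = time.time() if now is None else now
        self.events[(host, service)] = {"metric": metric, "time": now, "ttl": ttl}

    def expire(self, now: Optional[float] = None) -> List[Key]:
        """Remove and return the (host, service) pairs that stopped reporting."""
        now = time.time() if now is None else now
        stale = [k for k, e in self.events.items() if now - e["time"] > e["ttl"]]
        for k in stale:
            del self.events[k]
        return stale


index = PushIndex()
index.push("web-1", "cpu", 0.30, ttl=30, now=0)
index.push("burst-7", "cpu", 0.50, ttl=30, now=0)  # new auto-scaled node: no config change
index.push("web-1", "cpu", 0.40, ttl=30, now=25)
print(index.expire(now=40))  # [('burst-7', 'cpu')]
```

Whether an expired entry means a node was deliberately scaled in or has failed would be decided by further rules.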

Nagios or Icinga: not necessarily the best choice

Jonah Kowall, Research Vice President at Gartner, explained the disadvantages of Nagios/Icinga as early as the beginning of 2013 in an article with the somewhat drastic title "Got Nagios? Get rid of it."

Monitoring system Riemann

In the BOSON Architecture, we use Riemann as the monitoring system.

Riemann monitors all systems and controls alerting. With suitable rules, it can distinguish whether a load limit was exceeded because of a real problem or only as a short-term peak, for example during the installation of an application.
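One way to separate a short peak from a real problem is to alert only when a threshold stays exceeded for a minimum time, similar in spirit to Riemann's `stable` stream. The sketch below is hypothetical Python, not Riemann configuration, and the 90% limit and 120-second hold time are example values.

```python
from typing import Optional


class SustainedThreshold:
    """Alert only if a metric stays above a limit for at least hold_s seconds."""

    def __init__(self, limit: float, hold_s: float):
        self.limit = limit
        self.hold_s = hold_s
        self.above_since: Optional[float] = None

    def observe(self, ts: float, value: float) -> bool:
        if value <= self.limit:
            self.above_since = None  # back to normal: forget the short peak
            return False
        if self.above_since is None:
            self.above_since = ts
        return ts - self.above_since >= self.hold_s


rule = SustainedThreshold(limit=0.90, hold_s=120)
peak = [(0, 0.95), (30, 0.97), (60, 0.50)]         # short spike, e.g. an installation
problem = [(100, 0.95), (160, 0.96), (230, 0.97)]  # load stays high
print([rule.observe(t, v) for t, v in peak])     # [False, False, False]
print([rule.observe(t, v) for t, v in problem])  # [False, False, True]
```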

For all other areas, such as predictive analytics, BI, metrics or the Data Lake, different applications and systems can be used. The choice depends largely on the experience of the application team and, above all, of the DevOps team.

To keep the process landscape able to grow and stay harmonized in the long term, all systems and applications must interact well with each other, and updates or upgrades must remain compatible.

The key principle of the BOSON Architecture is that all communication is carried out via a central streaming and coordination unit, such as Apache NiFi. All messages, events and other data pass through this component. We also route monitoring via Riemann and the storage of metrics through NiFi.

As a result, each component can be replaced or updated at any time without stopping the entire system. With NiFi as the central coordination unit, the workflow of the business processes can be changed or extended at any time during ongoing operation, without affecting the rest of the system. This leads to an emergent organization of business processes.
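The decoupling argument can be reduced to a routing table: producers address a logical destination, and the coordination unit maps it to whichever system currently fills that role. The `Router` class and the destination name are hypothetical and only illustrate the principle; in NiFi, the comparable step would be reconfiguring the relevant part of the running flow.

```python
import threading
from typing import Callable, Dict

Handler = Callable[[dict], None]


class Router:
    """Central routing table: producers address a logical name, never a concrete system."""

    def __init__(self) -> None:
        self._routes: Dict[str, Handler] = {}
        self._lock = threading.Lock()

    def register(self, name: str, handler: Handler) -> None:
        with self._lock:  # swap atomically while traffic keeps flowing
            self._routes[name] = handler

    def send(self, name: str, message: dict) -> None:
        with self._lock:
            handler = self._routes[name]
        handler(message)


old_store, new_store = [], []
router = Router()
router.register("metrics-store", old_store.append)
router.send("metrics-store", {"cpu": 0.3})
router.register("metrics-store", new_store.append)  # replace the database at runtime
router.send("metrics-store", {"cpu": 0.4})
print(old_store, new_store)  # [{'cpu': 0.3}] [{'cpu': 0.4}]
```

The producer code never changes when the store behind "metrics-store" is replaced; only the routing entry does.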

Get in touch or send us an email at contact@MySecondWay.com